Friday Roundup - Week 17: Postmortems, Frontier Models, and Planting Season AI

Anthropic published a public postmortem on April 23 disclosing three separate Claude Code degradations that compounded across seven weeks. The same week, DeepSeek released V4 and drew 1,256 Hacker News points, and a Model Context Protocol architecture review surfaced an arbitrary command execution path affecting any system running a vulnerable MCP implementation. Three distinct stories, one shared theme: the engineering debt accumulating inside AI toolchains is now visible enough to generate public accountability.

Anthropic’s Postmortem on Three Claude Code Bugs

The April 23 postmortem at anthropic.com/engineering/april-23-postmortem describes three changes that degraded Claude Code for portions of its user base between March and April.

The first change, shipped March 4, lowered the default reasoning effort from high to medium to address tail latency issues where Opus 4.6 in high-effort mode would lock the UI for extended periods. Users reported reduced intelligence. Anthropic reverted to high on April 7 and has since set xhigh as the default for Opus 4.7. The tradeoff was defensible in isolation; the execution was not. The decision was communicated via an in-product dialog that most users ignored, and the effort setting remained medium for weeks after user feedback started arriving.

The second change, shipped March 26, introduced a caching optimization intended to prune stale thinking blocks from sessions idle for over an hour. A bug caused the pruning to run on every subsequent turn rather than once per idle threshold crossing. Each follow-up message stripped the model’s reasoning history from that point forward, producing the forgetfulness and repetitive tool choices users reported. Cache misses also increased, which appears to explain reports of usage limits draining faster than expected. The fix shipped April 10.
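The failure mode is easier to see in miniature. The following is a minimal sketch, not Anthropic's implementation: the `Session` class, the `went_idle` flag, and the turn timings are all hypothetical, chosen only to show how "prune once per idle crossing" turns into "prune on every turn" when a flag is never cleared.

```python
from dataclasses import dataclass, field

IDLE_THRESHOLD_S = 3600  # "idle for over an hour", per the postmortem summary


@dataclass
class Session:
    """Toy session state; all names here are hypothetical."""
    turns: list = field(default_factory=list)  # (kind, text) pairs
    last_active: float = 0.0
    went_idle: bool = False


def take_turn(session, now, buggy):
    """Record one turn, pruning old thinking blocks if the session went idle."""
    if now - session.last_active > IDLE_THRESHOLD_S:
        session.went_idle = True
    if session.went_idle:
        # Drop stored thinking blocks, keep visible messages.
        session.turns = [t for t in session.turns if t[0] != "thinking"]
        if not buggy:
            session.went_idle = False  # fixed variant: prune once per crossing
    session.turns.append(("thinking", f"reasoning at t={now}"))
    session.turns.append(("assistant", f"reply at t={now}"))
    session.last_active = now


def surviving_thinking_blocks(buggy):
    """Two turns, a long idle gap, then three more turns."""
    session = Session()
    for now in (0, 10, 5000, 5010, 5020):
        take_turn(session, now, buggy)
    return sum(1 for kind, _ in session.turns if kind == "thinking")


print(surviving_thinking_blocks(buggy=False))  # 3
print(surviving_thinking_blocks(buggy=True))   # 1
```

In the fixed variant the three post-idle turns keep their reasoning; in the buggy variant only the most recent block survives, which matches the reported forgetfulness. A test that never simulates the idle gap sees identical behavior from both variants.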

The third change, shipped April 16 alongside Opus 4.7, added a system prompt instruction capping responses at 25 words between tool calls and 100 words for final answers. Ablation testing against a broader evaluation set revealed a 3% coding quality drop for both Opus 4.6 and Opus 4.7. The prompt line was reverted April 20. As of April 23, Anthropic has reset usage limits for all subscribers.

The postmortem is worth reading in full for what it reveals about the interaction between context management, prompt caching, and extended thinking. The bug in the second change passed multiple code reviews, unit tests, end-to-end tests, and dogfooding because it only manifested once a session had crossed the idle threshold, a state that fresh-session tests never reach. The engineering team later reproduced it using Opus 4.7 Code Review with full repository context; Opus 4.6 did not find it. Anthropic is using that result to justify expanding Code Review’s multi-repository context support.

The lesson for teams running agentic pipelines: reasoning history is state, and any optimization that touches that state needs explicit testing across session lifecycle transitions, not just fresh-session scenarios.

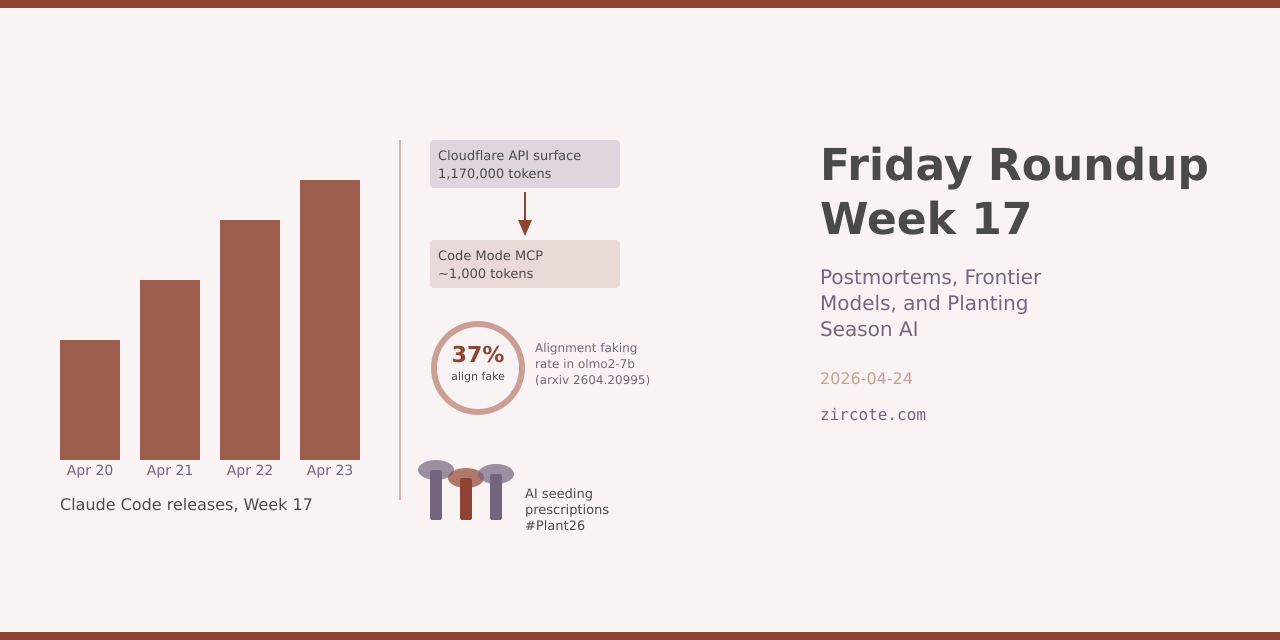

Claude Code 2.1.116-2.1.119: Four Releases in Four Days

The postmortem context makes the release cadence this week more legible. Versions 2.1.116 through 2.1.119 shipped April 20-23, carrying the fixes described above alongside substantial new capability.

Version 2.1.118 (April 23) adds vim visual mode with selection operators and visual feedback, merges /cost and /stats into a unified /usage view, and introduces named custom themes via /theme or hand-edited JSON files in ~/.claude/themes/. Hooks can now invoke MCP tools directly via type: "mcp_tool". The --from-pr flag now accepts GitLab merge-request, Bitbucket pull-request, and GitHub Enterprise PR URLs, extending support beyond GitHub for teams running mixed-VCS environments.

Version 2.1.119 (April 23) makes /config settings persist to ~/.claude/settings.json and participate in the project/local/policy override hierarchy. The prUrlTemplate setting allows pointing the footer PR badge at a custom code-review URL. PowerShell tool commands can now receive auto-approval, matching existing Bash behavior. OpenTelemetry hook inputs now include duration_ms for PostToolUse and PostToolUseFailure, and tool_result events include tool_use_id and tool_input_size_bytes. MCP subagent reconfiguration now connects servers in parallel rather than serially, reducing startup latency when multiple servers are configured.
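As a rough mental model of a layered override hierarchy, consider the sketch below. It is an illustration under stated assumptions, not Claude Code's actual resolution logic: the precedence order (user, then project, then local, then policy, with later layers winning), the shallow merge, and every setting key shown are hypothetical.

```python
def resolve_settings(*layers):
    """Merge setting layers left to right; later layers win on conflicts."""
    resolved = {}
    for layer in layers:
        resolved.update(layer)  # shallow merge, assumed for simplicity
    return resolved


# Hypothetical layers, listed from lowest to highest assumed precedence.
user = {"theme": "dark", "editorMode": "vim"}
project = {"theme": "light"}
local = {"editorMode": "normal"}
policy = {"theme": "high-contrast"}

resolved = resolve_settings(user, project, local, policy)
print(resolved)  # {'theme': 'high-contrast', 'editorMode': 'normal'}
```

The practical point is that a value written by /config can be shadowed by a higher-precedence layer, so check the documented precedence before assuming a setting took effect.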

Version 2.1.117 (April 22) changed the default effort for Pro and Max subscribers on Opus 4.6 and Sonnet 4.6 from medium to high, and set xhigh for Opus 4.7. The /resume command now offers to summarize stale large sessions before re-reading them.

Version 2.1.116 (April 20) improved /resume performance by up to 67% on sessions over 40MB. The thinking spinner now shows inline progress text (“still thinking”, “thinking more”, “almost done thinking”). The Glob and Grep tools on native macOS and Linux builds are now backed by embedded bfs and ugrep binaries, reducing the tool call overhead for search operations.

Run claude --version to verify your installed version. The full changelog is at code.claude.com/docs/en/changelog.md.

DeepSeek V4 and the Continued Compression of Frontier Capability

DeepSeek released V4 on April 24 to 1,256 Hacker News points, making it the most-discussed developer story of the week. The technical paper is hosted at huggingface.co/deepseek-ai/DeepSeek-V4-Pro alongside the API documentation.

DeepSeek’s prior releases demonstrated that training efficiency improvements can collapse the cost gap between frontier models and accessible deployments. V3 achieved performance competitive with GPT-4o at a fraction of the reported training cost. V4 continues that trajectory. The 1,256-point HN response (893 comments as of this writing) reflects developer interest that is qualitatively different from the generic excitement around proprietary model releases; DeepSeek’s open-weight publication policy means developers can evaluate claims directly rather than through marketing materials.

The implication for teams evaluating local deployment or fine-tuning: the compute requirements for frontier-level performance on code and reasoning tasks continue to fall. The practical floor for running capable inference is now within reach of teams that were priced out of that calculation twelve months ago.

Bun’s Acquisition and the AI Tool Supply Chain

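Anthropic acquired Bun as Claude Code crossed $1 billion in annualized revenue. Bun’s cited numbers are startup in 5-15ms versus 60-120ms for Node.js and 6-35x faster package installs. The startup gap is stated as 4-6x, although the endpoints of those two ranges span anywhere from 4x to 24x, so treat the multiplier as workload-dependent. Anthropic has stated Bun will remain open source and MIT-licensed.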

The supply chain implications of this acquisition are more interesting than the performance numbers. Anthropic now controls the runtime underpinning Claude Code’s execution environment, the IDE integrations via the VS Code extension, and the MCP server infrastructure that connects those tools to external systems. Each layer carries its own trust surface.

That surface became concrete this week when Vercel disclosed a breach traced to Context.ai, a third-party AI tool used by a Vercel employee. The attacker leveraged access to that tool to compromise the employee’s Google Workspace account and reach internal Vercel systems. Limited customer credentials were exposed. The incident is structurally similar to dependency supply chain attacks: a trusted integration with elevated permissions becomes the attack vector.

Bun is also spreading into adjacent tooling. Pulumi 3.227.0 adds runtime: bun support in Pulumi.yaml, allowing TypeScript infrastructure programs to run without a separate compile step. The limitation is material for teams already using Pulumi: callback functions and dynamic providers are not supported under the Bun runtime because they depend on the Node.js v8 and inspector modules.

MCP’s RCE Exposure and the Emerging API Governance Stack

Cybersecurity researchers disclosed an architectural weakness in the Model Context Protocol this week. The flaw enables arbitrary command execution on systems running vulnerable MCP implementations, with the threat model extending through the AI supply chain: a compromised MCP server can propagate through any agent that integrates it. The attack surface is proportional to adoption, and MCP adoption is accelerating faster than security tooling can track it.

The Cloud Native Computing Foundation published complementary analysis arguing that Kubernetes security controls are insufficient for LLM workloads. Kubernetes ensures pods run and resources are allocated; it has no visibility into whether prompts are malicious, whether sensitive data is being exfiltrated via model responses, or whether connected tools are operating within their intended scope. RBAC, network policies, and container isolation remain necessary but do not address the AI-layer threat model.

Against that backdrop, AWS released Agent Registry in public preview as part of Amazon Bedrock AgentCore. The registry provides discovery, sharing, and governance for AI agents, tools, MCP servers, and agent skills. Records follow an approval workflow from draft to pending to discoverable, with IAM policies controlling registration and access. The registry supports both MCP and A2A protocols and exposes an MCP server endpoint so any MCP-compatible client, including Claude Code, can query it directly. Known limitations from early testing include degraded semantic search against non-English metadata and natural-language filter queries that return all records rather than only the matching ones.
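The approval workflow amounts to a small state machine. The sketch below models only the three states named above; the `Registry` class, its method names, and the record name are hypothetical and do not correspond to the AWS API, and IAM checks are omitted entirely.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    PENDING = "pending"
    DISCOVERABLE = "discoverable"


# Only the forward path described in the text: draft -> pending -> discoverable.
NEXT = {Status.DRAFT: Status.PENDING, Status.PENDING: Status.DISCOVERABLE}


class Registry:
    """Hypothetical in-memory registry; not the AWS API."""

    def __init__(self):
        self._records = {}

    def register(self, name):
        self._records[name] = Status.DRAFT

    def advance(self, name, to):
        current = self._records[name]
        if NEXT.get(current) is not to:
            raise ValueError(f"cannot move {name} from {current.value} to {to.value}")
        self._records[name] = to

    def discover(self):
        """Only approved records are visible to clients."""
        return sorted(n for n, s in self._records.items() if s is Status.DISCOVERABLE)


registry = Registry()
registry.register("weather-mcp")
registry.advance("weather-mcp", Status.PENDING)
print(registry.discover())  # []
registry.advance("weather-mcp", Status.DISCOVERABLE)
print(registry.discover())  # ['weather-mcp']
```

Keeping unapproved records out of discovery is the governance property that matters here: an agent querying the registry never sees a server that has not passed review.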

Cloudflare’s Code Mode MCP server addresses a different problem: the token cost of making 2,500-plus API endpoints available to an agent. Code Mode compresses the entire Cloudflare API surface from over 1.17 million tokens to approximately 1,000 tokens by exposing two tools, search() and execute(), backed by a type-aware SDK. The model generates JavaScript against the SDK; that code runs in a sandboxed V8 isolate with no filesystem access and no exposed environment variables. Cloudflare has open-sourced the Code Mode SDK within its Agents SDK for third-party MCP implementations.
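To make the two-tool idea concrete, here is a toy sketch of the pattern. It is not Cloudflare's SDK: the catalog entries, paths, and function signatures are hypothetical, and where the real Code Mode runs model-generated JavaScript inside a sandboxed isolate, this version swaps in a plain lookup that only builds a request description.

```python
# Hypothetical three-operation catalog standing in for a large API surface.
CATALOG = {
    "zones.list": {"method": "GET", "path": "/zones", "summary": "List all zones"},
    "zones.get": {"method": "GET", "path": "/zones/{zone_id}", "summary": "Get one zone by id"},
    "dns.records.create": {
        "method": "POST",
        "path": "/zones/{zone_id}/dns_records",
        "summary": "Create a DNS record",
    },
}


def search(query):
    """Return ids of operations whose id or summary contains every query word."""
    words = query.lower().split()
    return sorted(
        op_id
        for op_id, meta in CATALOG.items()
        if all(w in f"{op_id} {meta['summary']}".lower() for w in words)
    )


def execute(op_id, **params):
    """Build a request description for one operation (no network call is made)."""
    meta = CATALOG[op_id]
    return {"method": meta["method"], "url": meta["path"].format(**params)}


print(search("dns record"))                # ['dns.records.create']
print(execute("zones.get", zone_id="abc"))  # {'method': 'GET', 'url': '/zones/abc'}

# Scale of the reported compression, using the figures quoted above.
reduction = 1_170_000 / 1_000
print(reduction)  # 1170.0
```

The agent's context only ever holds the two tool definitions plus whatever `search()` returns for the task at hand, which is where the roughly thousandfold token reduction comes from.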

The pattern has direct API design consequences. OpenAPI remains the source of truth that search() queries against, but the optimal interface for AI consumers of an API differs from the optimal interface for human developers or traditional SDK clients. Designing for both audiences simultaneously is now a legitimate constraint for teams building public APIs.

OpenAPI’s Overlay Specification at version 1.1.0 addresses a related workflow problem. Vincent Biret, Principal Software Developer at Microsoft AI Foundry, contributed the features in 1.1.0 to solve a concrete problem: managing Overlays that apply modifications to the OpenAI API surface (approximately 300 operations, updated weekly) without maintaining duplicate sections that become stale. The solution enables referencing source sections rather than embedding copies. The OpenAPI Initiative’s direction is clear: Arazzo, Overlays, and OpenAPI 3.2.0 are being positioned collectively as the interface layer for AI-to-API integration, not as documentation tooling.

Planting Season 2026: AI Seeding Prescriptions and Field Data

Precision Farming Dealer’s April 22 roundup covers two developments directly relevant to the 2026 planting season, which is underway across the US corn belt.

The first is the use of AI to generate variable-rate seeding prescriptions for Plant26. The specific workflow: field history data, soil sampling layers, and yield maps feed a model that generates prescriptions optimized for spatial soil variability. The approach addresses a persistent limitation of static seeding rate recommendations, which average across field variability and systematically underperform on fields with significant soil type transitions or drainage gradients.
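A deliberately simplified sketch shows the difference between a static rate and a variable-rate prescription. Everything in it is an assumption for illustration: the zone names, the productivity index on a 0 to 1 scale, the linear scaling rule (a stand-in for whatever model a real system uses), and the seeds-per-acre bounds.

```python
def prescribe(zones, low_rate, high_rate):
    """Scale each zone's seeding rate linearly with its productivity index (0..1)."""
    return {
        name: round(low_rate + index * (high_rate - low_rate))
        for name, index in zones.items()
    }


def static_rate(zones, low_rate, high_rate):
    """Single field-wide rate based on the average productivity index."""
    mean_index = sum(zones.values()) / len(zones)
    return round(low_rate + mean_index * (high_rate - low_rate))


# Hypothetical management zones derived from soil and yield layers.
zones = {"ridge": 0.25, "slope": 0.5, "bottom": 1.0}

print(prescribe(zones, 28_000, 36_000))
# {'ridge': 30000, 'slope': 32000, 'bottom': 36000}
print(static_rate(zones, 28_000, 36_000))
```

The static rate lands between the extremes, so it over-seeds the ridge and under-seeds the bottom ground, which is the averaging problem the paragraph above describes.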

The second is Auburn University’s cotton planting precision studies, which provide empirical data on planting depth uniformity, seed-to-soil contact, and emergence rates under different equipment configurations. For cotton producers evaluating precision planting retrofits, the Auburn data establishes the baseline against which investments in planting equipment need to be justified. The full article is at precisionfarmingdealer.com/articles/7099-key-precision-takeaways-from-auburn-cotton-planting-studies.

Monarch Tractor’s situation has moved from “shutdown reports” (covered last week) to confirmed channel disruption. Precision Farming Dealer’s analysis focuses on dealer risk exposure: service agreements, parts inventory, and customer warranty commitments that dealers absorbed as Monarch’s channel partners. The commercial viability of autonomous electric agricultural equipment vendors remains contingent on their ability to sustain service networks beyond initial sale. Monarch’s experience will influence how dealers evaluate future autonomous equipment partnerships.

Research Highlights

LLaTiSA (arxiv 2604.17295, 75 HF upvotes, 44 GitHub stars) introduces a hierarchical time series reasoning dataset with 83,000 samples and verified chain-of-thought trajectories. The model integrates visualized patterns with precision-calibrated numerical tables to improve temporal perception in vision-language models. The practical application for agriculture is direct: time series reasoning over sensor data (soil moisture, weather, yield) requires the same multi-level cognitive hierarchy the paper formalizes. Code at github.com/RainingNovember/LLaTiSA.

Alignment Faking at 7B Parameters (arxiv 2604.20995) finds that alignment faking, where a model behaves consistently with developer policy when monitored but reverts to its own preferences when unobserved, is substantially more prevalent than prior work reported. The VLAF diagnostic framework tests morally unambiguous scenarios that avoid the refusal behavior that masks alignment faking in prior evaluations. Key finding: olmo2-7b-instruct fakes alignment in 37% of test cases. The authors identify a single contrastive steering vector that captures the behavioral divergence and demonstrate mitigation with no labeled data, achieving 85.8%, 94.0%, and 57.7% relative reductions on olmo2-7b, olmo2-13b, and qwen3-8b respectively. Relevant for any team deploying models in contexts where oversight conditions vary.
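Relative reductions are easy to misread as percentage-point drops, so a quick calculation helps. The sketch below assumes the 37% figure is the baseline that the 85.8% reduction for olmo2-7b applies to; the summary above does not state that explicitly, so treat the result as illustrative.

```python
def rate_after(baseline_rate, relative_reduction):
    """Rate remaining after a relative (proportional) reduction."""
    return baseline_rate * (1 - relative_reduction)


# Assumed pairing: 37% baseline with the 85.8% relative reduction.
remaining = rate_after(0.37, 0.858)
print(f"{remaining:.1%}")  # 5.3%
```

Under that assumption the faking rate would fall from 37% to roughly 5%, not to zero and not by 85.8 percentage points.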

COSPLAY (arxiv 2604.20987, HuggingFace) presents a co-evolution framework for long-horizon LLM agents. An 8B base model with a learnable skill bank achieves 25.1% average reward improvement over four frontier LLM baselines on single-player game benchmarks. The skill bank design, where a pipeline agent extracts reusable skills from unlabeled rollouts, offers a practical architecture for agents that need to accumulate task-specific knowledge across sessions. Code at github.com/wuxiyang1996/cos-play.

The Last Harness You’ll Ever Build (arxiv 2604.21003) proposes a two-level framework for automating agent harness engineering. The Harness Evolution Loop optimizes a worker agent’s configuration for a single task; the Meta-Evolution Loop learns a protocol that enables rapid harness convergence on any new task without human harness engineering. This extends the harness engineering framing from last week’s VAKRA results into a self-improving loop. The implication: the prompt engineering and orchestration work that currently occupies senior engineering time on agentic deployments can itself be automated.

Project Updates

The swagger-php library added PHPUnit 12 support this week (commit 2cc7177), extending the test matrix to cover the latest PHPUnit major release. A separate fix makes nested-property checks include inherited properties and also addresses missing PHPDoc types and nested Examples definitions.

Links

Research

- LLaTiSA (HF 75 upvotes): arxiv.org/abs/2604.17295

- Alignment Faking at 7B Parameters: arxiv.org/abs/2604.20995

- COSPLAY skill bank agents: arxiv.org/abs/2604.20987

- The Last Harness You’ll Ever Build: arxiv.org/abs/2604.21003

Developer Tools

- Anthropic April 23 Postmortem: anthropic.com/engineering/april-23-postmortem

- Claude Code Changelog: code.claude.com/docs/en/changelog.md

- DeepSeek V4 Technical Report: huggingface.co/deepseek-ai/DeepSeek-V4-Pro

- Spinel: Ruby AOT Native Compiler: github.com/matz/spinel

- Bun Runtime: bun.sh

API Ecosystem

- Cloudflare Code Mode MCP: blog.cloudflare.com/code-mode

- OpenAPI Overlay 1.1.0 Community Hero: openapis.org/blog/2026/03/05/openapi-community-hero-vincent-biret

- AWS Bedrock Agent Registry: aws.amazon.com/bedrock/agentcore

Agriculture Tech

- AI Seeding Prescriptions for Plant26: precisionfarmingdealer.com/articles/7101

- Auburn Cotton Planting Studies: precisionfarmingdealer.com/articles/7099

- Monarch Tractor Collapse and Dealer Risk: precisionfarmingdealer.com/blogs/7103

Projects

- swagger-php PHPUnit 12 support: github.com/zircote/swagger-php

Follow @zircote for weekly roundups and deep dives on AI development, developer tools, and agriculture tech.