Friday Roundup - Week 18: MCP Governance, Security, and AgTech AI Agents

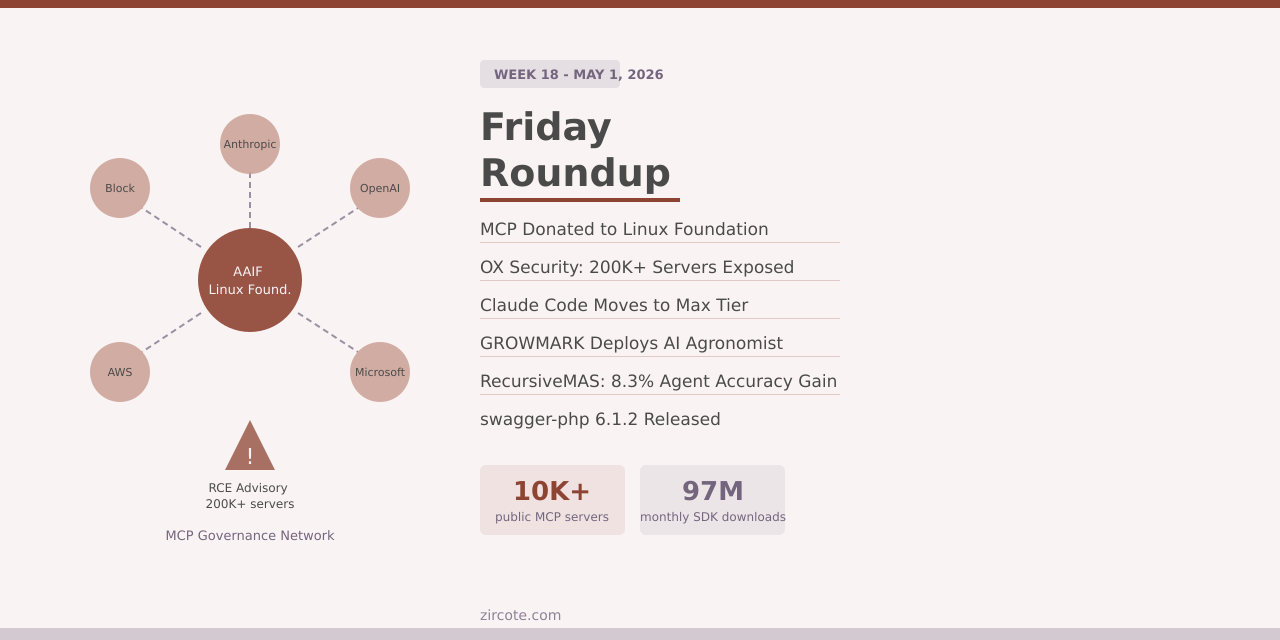

Two stories defined the Model Context Protocol’s trajectory this week, and they pulled in opposite directions. On one side: institutional maturity, with the Linux Foundation’s Agentic AI Foundation formally taking stewardship of MCP and the April AAIF MCP Dev Summit demonstrating enterprise adoption at a scale that puts the protocol firmly in production. On the other: a critical security advisory from OX Security disclosing that the protocol’s STDIO transport design enables arbitrary remote code execution, with an estimated 200,000-plus server deployments and 150 million SDK downloads exposed.

The governance milestone and the vulnerability are not separate stories. They are the same story about what happens when infrastructure outpaces its security posture.

MCP Moves to the Linux Foundation

Anthropic donated the Model Context Protocol to the Agentic AI Foundation (AAIF), a directed fund under the Linux Foundation co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, Cloudflare, and Bloomberg. The donation formalizes what the adoption numbers already implied: MCP is no longer Anthropic’s protocol; it is industry infrastructure.

The scale warrants the governance shift. More than 10,000 active public MCP servers span everything from developer tools to Fortune 500 deployments. ChatGPT, Cursor, Gemini, Microsoft Copilot, and Visual Studio Code all implement the protocol. Enterprise infrastructure support from AWS, Cloudflare, Google Cloud, and Microsoft Azure followed commercial adoption rather than preceding it. Monthly SDK downloads across Python and TypeScript exceed 97 million. The Linux Foundation brings the same neutral stewardship model it has applied to the Linux kernel, Kubernetes, Node.js, and PyTorch. MCP joins Block’s goose and OpenAI’s AGENTS.md as founding projects under the AAIF.

The MCP Dev Summit North America 2026, held April 2-3 at the New York Marriott Marquis, drew roughly 1,200 attendees and made the enterprise commitment concrete. Uber’s agentic platform team described tens of thousands of agent executions per week running through an MCP Gateway and Registry, automatically exposing thousands of internal Thrift, Protobuf, and HTTP endpoints to agents. The gateway pattern as a control plane for all agentic traffic, with personally identifiable information (PII) redaction and identifier scrubbing before requests reach external models, emerged as the dominant architectural consensus across Amazon, Uber, Docker, Kong, and Solo.io. Amazon has formalized a bundle of MCP tools, agent skills, context files, and Standard Operating Procedures into composable, shareable agent configurations via its open-source agent-sop project.
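As a rough illustration of the redaction step in that gateway pattern, the sketch below scrubs string values in a tool-call payload before it is forwarded. The two regular expressions, the placeholder tokens, and the function names are assumptions made for this example; none of the summit speakers' implementations are public in this form, and a production gateway would rely on a vetted PII detection service rather than two patterns.

```python
import re

# Hypothetical patterns for illustration only; real PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace recognizable identifiers in a single string with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


def redact_payload(value):
    """Recursively redact every string inside a JSON-like payload (dicts, lists, scalars)."""
    if isinstance(value, str):
        return redact(value)
    if isinstance(value, dict):
        return {key: redact_payload(item) for key, item in value.items()}
    if isinstance(value, list):
        return [redact_payload(item) for item in value]
    return value  # numbers, booleans, and None pass through unchanged


if __name__ == "__main__":
    call = {"query": "mail jane@example.com", "ids": ["123-45-6789", 7]}
    print(redact_payload(call))
```

Doing this once at the gateway, rather than in each MCP server, is what makes the gateway a control plane: every request crosses the same scrubbing step regardless of which tool it targets.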

Context bloat, the problem of MCP tool definitions consuming disproportionate model context, is being solved at the client layer. Claude Code now uses progressive tool discovery and defers tools that would consume more than ten percent of the context window, with Anthropic reporting approximately 85 percent token reductions in production.
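The deferral idea can be sketched in a few lines. This is one possible reading, not Claude Code's actual algorithm: it treats the ten percent figure as a cumulative budget, loads the cheapest tool definitions first, and defers the rest so that only their names would be visible until requested. `ToolDef`, `partition_tools`, and the pre-computed token counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToolDef:
    name: str
    tokens: int  # assumed pre-computed token cost of the full tool definition


def partition_tools(tools, context_window, threshold=0.10):
    """Split tools into (loaded, deferred) names under a cumulative token budget."""
    budget = context_window * threshold
    loaded, deferred, used = [], [], 0
    # Cheapest definitions first, so as many tools as possible fit in the budget.
    for tool in sorted(tools, key=lambda t: t.tokens):
        if used + tool.tokens <= budget:
            loaded.append(tool.name)
            used += tool.tokens
        else:
            deferred.append(tool.name)
    return loaded, deferred


if __name__ == "__main__":
    tools = [ToolDef("search", 500), ToolDef("git", 700), ToolDef("crm", 9000)]
    print(partition_tools(tools, context_window=10_000))
```

A real client would also need a way for the model to request a deferred tool's full definition on demand, which this sketch leaves out.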

MCP’s RCE Problem

Two weeks after the Dev Summit’s enterprise endorsements, OX Security published an advisory identifying a systemic architectural flaw in MCP’s STDIO transport: the transport allows arbitrary OS commands to be executed through its configuration, with no input validation or security restrictions in the official SDKs across Python, TypeScript, Java, and Rust. The advisory disclosed over ten CVEs, with affected projects including LiteLLM (CVE-2026-30623, Critical, patched), Windsurf (CVE-2026-30615, Critical), LangChain-Chatchat (CVE-2026-30617, Critical), Agent Zero (CVE-2026-30624, Critical), and Bisheng/Jaaz (CVE-2026-33224, patched).

The attack surface is proportional to the adoption numbers cited at the summit. OX Security estimates more than 200,000 server deployments and 150 million SDK downloads are exposed. Demonstrated exploits covered LiteLLM, LangChain, Windsurf, LangFlow, and Cursor. Attackers can reach MCP servers through unauthenticated UI injection, hardening bypasses, and zero-click prompt injection in IDEs. Testing against eleven MCP marketplaces found that nine could be poisoned with a test payload.

Anthropic’s response was to describe command execution via STDIO as expected behavior for the protocol and to place responsibility for sanitization and input validation on application developers rather than the protocol itself. That position is defensible only if you accept that 97 million monthly SDK downloads represent a population of developers who will correctly implement input validation without explicit guidance from the spec. The pattern of enterprise adoption described at the Dev Summit, where organizations build gateway layers as control planes precisely because the protocol does not enforce governance at the transport level, suggests the industry has already concluded that they will not.

The practical guidance for teams running MCP-enabled tools: block public internet access to MCP services connected to sensitive APIs, treat all user input to MCP configurations as untrusted, use only servers from verified registries, deploy in sandboxed environments with minimal privileges, and implement audit logging at the gateway layer. The gateway pattern endorsed at the summit is not just an architectural preference; it is the primary mitigation available until the protocol addresses the underlying design.
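One way to act on "treat all user input to MCP configurations as untrusted" is to refuse any STDIO launch specification that is not on a pinned list. The sketch below assumes the `command` plus `args` configuration shape that many MCP clients use; the approved entry, the module name `example_notes_server`, and the function name are hypothetical, and this is not a mitigation taken from the OX Security advisory itself.

```python
# Hypothetical pinned launch specs; a real deployment would load these from a reviewed registry.
APPROVED_LAUNCHES = {
    ("python3", "-m", "example_notes_server"),
}


def resolve_launch(config: dict) -> list[str]:
    """Return an argv list for an approved STDIO server, or raise ValueError."""
    command = config.get("command")
    args = config.get("args", [])
    if not isinstance(command, str) or not isinstance(args, list):
        raise ValueError("malformed stdio config")
    if not all(isinstance(arg, str) for arg in args):
        raise ValueError("stdio args must be strings")
    argv = (command, *args)
    # Exact match only: allowlisting just the executable would still permit
    # arbitrary code through arguments such as `python3 -c ...`.
    if argv not in APPROVED_LAUNCHES:
        raise ValueError(f"launch spec not approved: {argv!r}")
    return list(argv)


if __name__ == "__main__":
    print(resolve_launch({"command": "python3", "args": ["-m", "example_notes_server"]}))
```

The returned list is meant to be passed to a process launcher without a shell (for example `subprocess.Popen(argv)` with the default `shell=False`), inside whatever sandbox the deployment already uses.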

Claude Code Exits the $20 Tier

Anthropic removed Claude Code from the $20 per month Pro plan for new users, who now need the $100 per month Max tier to access it. Existing Pro subscribers retain access. The change was initially described as an A/B test affecting approximately two percent of new signups, but global documentation updates indicate the shift is directional rather than experimental.

The pricing pressure is structural. Agentic usage patterns multiply context consumption by orders of magnitude compared to single-turn chat interactions. A developer running Claude Code for a four-hour session with full codebase context, multiple tool calls, and iterative file edits consumes far more compute than the same developer asking twenty isolated questions across the same period. The $20 tier was calibrated for chat usage; agent loops are a different product. The industry-wide price compression visible in DeepSeek V3 and V4 training costs does not automatically translate to inference cost reductions for heavy agentic workloads, where the input tokens from large context windows dominate.

Across the developer tooling market, the cost floor has shifted. GitHub Copilot Pro+ at $39 per month adds multi-agent capabilities. OpenAI’s Pro tier at $100 per month is the relevant comparison point for Anthropic’s Max. Claude Opus 4.7 is now available as a selectable model in Microsoft 365 Copilot for enterprise users. The market is separating into a baseline tier for code completion and single-turn assistance, and a premium tier for agentic workflows with persistent context. Teams building on agentic foundations need to cost-model against the premium tier, not the $20 baseline.

AgTech: AI Agents Reach the Field

Four developments this week signal that AI agents in agriculture have moved from pilot programs to production deployments integrated with commercial operations.

GROWMARK, the Illinois-based agricultural cooperative, unveiled an AI agent inside its myFS Agronomy app for the 2026 crop season. The agent combines real-time data analysis with agronomic expertise from GROWMARK’s crop specialists to deliver tailored recommendations to FS members. The design pairs model-generated analysis with field-specialist input, a pattern that addresses one of the persistent limitations of purely algorithmic agronomic recommendations: the gap between statistical patterns in training data and the local soil and weather context that practitioners hold. The agent is live for the current planting season, not a scheduled feature release.

Ecorobotix and Maya announced that Maya will join the Ecorobotix Group. Ecorobotix produces ultra-high precision spraying equipment; Maya is an AI-powered operational intelligence platform purpose-built for turf and land management, with hardware-agnostic data aggregation from every source on a managed surface. The merger combines precision application in the field with centralized, real-time data-driven decision-making. The business logic is straightforward: precision spraying without decision intelligence requires an operator to interpret the data and configure the equipment; decision intelligence without precision application has no actuation layer. The combined entity addresses both halves.

Biotalys received state registration in Florida for EVOCA, its AGROBODY biofungicide for citrus, which uses protein-based biocontrol rather than conventional chemical inputs. Florida registration is significant because Florida’s citrus production has been devastated by Huanglongbing (citrus greening disease), which has eliminated more than 90 percent of production over two decades. The EPA separately approved CarriCea T1, a breakthrough citrus rootstock engineered to defend against citrus greening at the tree level. Two approvals in the same week targeting the same disease represent a meaningful convergence of biological and genetic approaches to a problem that chemical inputs alone have failed to solve.

CIBO Technologies announced a three-year strategic partnership with Ingredion to advance regenerative agriculture across supply chains, its fourth such partnership announced in 2026. CIBO operates as an independent data and analytics platform for agriculture; Ingredion sources plant-based ingredients globally. The partnership reflects the commercial pressure on food ingredient supply chains to document and verify regenerative practices, where CIBO’s ability to measure environmental impact at the field level satisfies the traceability requirements that ingredient buyers increasingly impose.

Research Highlights

AutoResearchBench (arXiv 2604.25256) presents a benchmark for autonomous scientific literature discovery, with two task types: Deep Research, requiring multi-step tracing to a specific target paper, and Wide Research, requiring comprehensive collection of papers satisfying given conditions. The benchmark results are sobering. The most capable LLMs achieve 9.39% accuracy on Deep Research and 9.31% intersection over union on Wide Research, while many strong baselines fall below five percent. The gap between performance on general agentic web-browsing benchmarks (where frontier models have largely succeeded) and performance on research-oriented literature tasks reflects the qualitative difference between finding a product page and understanding scientific concepts with sufficient depth to identify the correct paper. The benchmark is publicly released.
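For readers unfamiliar with the Wide Research metric, intersection over union on sets of papers can be computed as below. This is the standard set definition; the benchmark's exact scoring procedure may differ in details, and the paper identifiers in the example are made up.

```python
def intersection_over_union(predicted, relevant) -> float:
    """Size of the overlap divided by the size of the combined set."""
    predicted, relevant = set(predicted), set(relevant)
    union = predicted | relevant
    if not union:
        return 0.0  # convention chosen for this sketch when both sets are empty
    return len(predicted & relevant) / len(union)


if __name__ == "__main__":
    found = {"paper-a", "paper-b", "paper-c"}
    truth = {"paper-b", "paper-c", "paper-d", "paper-e"}
    print(intersection_over_union(found, truth))  # 2 shared / 5 total = 0.4
```

The metric penalizes both missed papers and spurious ones, so a score near nine percent means the collected set barely overlaps the target set.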

RecursiveMAS (arXiv 2604.25917) extends recursive computation scaling from single models to multi-agent systems. The framework connects heterogeneous agents as a collaboration loop through a lightweight RecursiveLink module, enabling cross-agent latent state transfer without converting to text at each step. An inner-outer loop learning algorithm handles whole-system co-optimization across recursion rounds. Across nine benchmarks spanning mathematics, science, medicine, search, and code generation, RecursiveMAS delivers an average 8.3% accuracy improvement over single and multi-agent baselines, with 1.2-2.4x inference speedup and 34.6-75.6% token reduction. The token reduction matters practically: it means recursive agents can sustain longer collaborative reasoning without exhausting context budgets. Code and data are at recursivemas.github.io.

Terminal Bench Task Design (arXiv 2604.28093) argues that over 15% of tasks in popular terminal-agent benchmarks are reward-hackable, and catalogs the failure modes: AI-generated instructions that an AI agent can recognize and exploit, over-prescriptive specifications that constrain the agent to a solution rather than testing capability, clerical difficulty (typos and ambiguous specifications) that tests reading comprehension rather than engineering judgment, oracle solutions that assume hidden knowledge, tests that validate the wrong behavior, and environments where the agent can satisfy the scoring mechanism without solving the actual problem. The core argument is that benchmark tasks should be adversarial (designed to resist gaming), difficult (testing genuine capability), and legible (unambiguous in what they measure). The paper distinguishes between conceptual difficulty, which is what capability benchmarks should measure, and environmental difficulty (configuration complexity), which measures operational overhead rather than intelligence.

Project Updates

swagger-php released version 6.1.2 on April 28, with two changes: parameter docblock handling improvements (PR 1998) and correct mapping of array<K, V> type annotations to type: object with additionalProperties in the generated OpenAPI schema (PR 2003). The second change affects any PHP code using associative array type hints that expect object representation in the output schema. Run composer update zircote/swagger-php to pick up the fix.
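To make the second change concrete, the sketch below models the mapping in Python. It is not swagger-php code: the function name, the scalar table, and the assumption that the value type `V` becomes the `additionalProperties` schema are illustrative, and it handles only simple scalar value types.

```python
import re

# Assumed PHP-scalar to OpenAPI-type table, for illustration only.
SCALARS = {"int": "integer", "float": "number", "string": "string", "bool": "boolean"}


def schema_for(annotation: str) -> dict:
    """Map an `array<K, V>` annotation to an object schema keyed by arbitrary strings."""
    match = re.fullmatch(r"array<\s*(\w+)\s*,\s*(\w+)\s*>", annotation)
    if match is None:
        raise ValueError(f"unsupported annotation: {annotation}")
    value_type = match.group(2)
    if value_type not in SCALARS:
        raise ValueError(f"unsupported value type: {value_type}")
    return {"type": "object", "additionalProperties": {"type": SCALARS[value_type]}}


if __name__ == "__main__":
    print(schema_for("array<string, int>"))
```

If generated clients or validators previously treated these properties as lists, regenerate them after upgrading and diff the emitted schema.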

Links

MCP and Agentic Infrastructure

- AAIF Founding and MCP Donation: anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

- Agentic AI Foundation: aaif.io

- AAIF MCP Dev Summit Coverage (InfoQ): infoq.com/news/2026/04/aaif-mcp-summit

- OX Security MCP RCE Advisory: cybersecuritynews.com/anthropics-mcp-vulnerability

- Amazon agent-sop project: github.com/strands-agents/agent-sop

Research

- AutoResearchBench (arXiv 2604.25256): arxiv.org/abs/2604.25256

- RecursiveMAS (arXiv 2604.25917): arxiv.org/abs/2604.25917

- Terminal Bench Task Design (arXiv 2604.28093): arxiv.org/abs/2604.28093

Agriculture Tech

- GROWMARK myFS AI Agent: GROWMARK Precision Ag News via AgWired

- Ecorobotix and Maya Merger: agwired.com/2026/04/30/precision-ag-news-4-30-2

- Biotalys EVOCA Florida Registration: agwired.com/2026/04/30/precision-ag-news-4-30-2

Projects

- swagger-php 6.1.2: github.com/zircote/swagger-php/releases/tag/6.1.2

Follow @zircote for weekly roundups and deep dives on AI development, developer tools, and agriculture tech.